For the Last Time: Temporal Sensitivity and Perceived Timing of the Final Stimulus in an Isochronous Sequence

Abstract

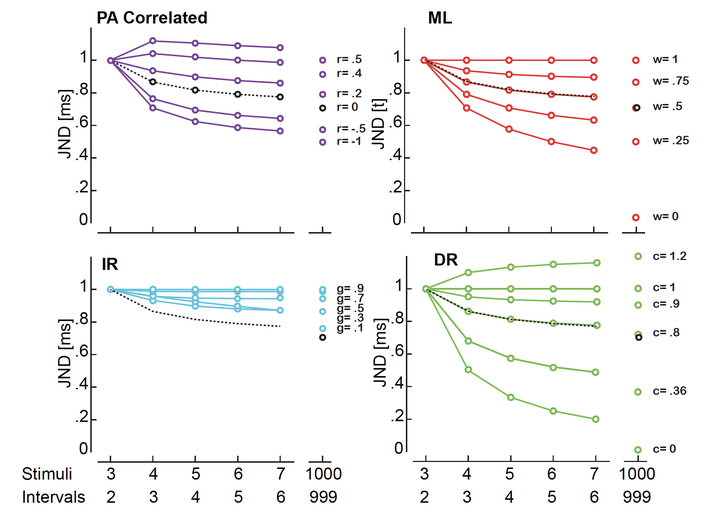

An isochronous sequence is a series of repeating events with the same inter-onset interval. A common finding is that temporal sensitivity to irregularities increases with sequence length: deviations from isochrony are detected more accurately in longer sequences. The literature offers several theoretical accounts of how the brain processes sequences to detect irregularities, yet a systematic comparison of the predictions these accounts make is still lacking. To compare these predictions, we asked participants to report whether the last stimulus of a regularly timed sequence appeared ‘earlier’ or ‘later’ than expected. This task allowed us to analyse bias and performance separately. Sequence lengths (3, 4, 5 or 6 beeps) were either randomly interleaved or presented in separate blocks. We replicate previous findings showing that temporal sensitivity increases with longer sequences in the interleaved condition, but not in the blocked condition (where performance is higher overall). The results also indicate a consistent bias in reporting whether the last stimulus is isochronous, irrespective of how many stimuli the sequence contains. This result is consistent with a perceptual acceleration of stimuli embedded in isochronous sequences. From the comparison of the models' predictions, we determine that the improvement in sensitivity is best captured by an averaging of successive estimates, but with an element that limits performance improvement below statistical optimality. None of the models considered, however, provides an exhaustive explanation for the pattern of results found.