Abstract

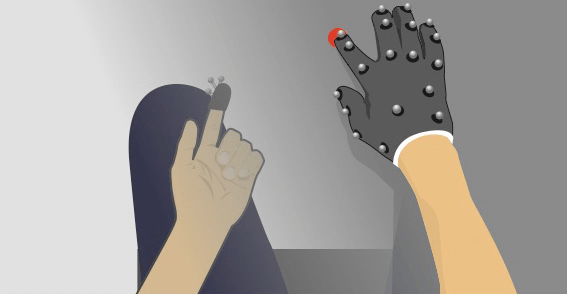

It has been suggested that the integration of multiple body-related sources of information within the peri-personal space (PPS) scaffolds body ownership. However, a normative computational framework detailing the functional role of PPS is still missing. Here we cast PPS as a visuo-proprioceptive Bayesian inference problem in which objects we see in our environment are more likely to engender sensations as they come near the body. We propose that PPS reflects this increased a priori probability of visuo-proprioceptive coupling surrounding the body. To test this prediction, we immersed participants in a highly realistic virtual reality (VR) simulation of their right arm and surrounding environment. Participants performed target-directed reaches toward visual, proprioceptive, and visuo-proprioceptive targets while their reaching arm was either displayed (body visible condition) or not (body invisible condition). Reach end-points were analyzed within the coupling prior framework, in which the extent of PPS is taken to be represented by the spatial dispersion of the coupling prior between visual and proprioceptive estimates of arm location. Results demonstrate that when the body is not visible, the spatial dispersion of the visuo-proprioceptive coupling relaxes, whereas the strength of coupling remains stable. When the body was rendered invisible, the attractive pull of visual and proprioceptive localization toward one another showed a distance-dependent alteration, with a stronger pull at small spatial discrepancies; this effect is well accounted for by the visuo-proprioceptive coupling prior. These results suggest that the visible body grounds visuo-proprioceptive coupling preferentially in near rather than far space.
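The coupling-prior idea can be illustrated with a minimal sketch. It assumes a one-dimensional arm position, Gaussian visual and proprioceptive noise (`sigma_v`, `sigma_p`), and a prior on the visuo-proprioceptive discrepancy that mixes a Gaussian of width `sigma_c` (a stand-in for the spatial dispersion of coupling) with a flat, uncoupled component, weighted by `w` (a stand-in for coupling strength). This Gaussian-plus-flat mixture is one common way to formalize a coupling prior; the function name, parameters, and values are hypothetical illustrations, not the model or fitted values reported in this work. Each cue's posterior-mean estimate is pulled toward the other, and that pull shrinks as the posterior probability of coupling drops at larger discrepancies.

```python
import math


def gaussian_pdf(x: float, var: float) -> float:
    """Density of a zero-mean normal distribution with variance `var` at x."""
    return math.exp(-0.5 * x * x / var) / math.sqrt(2.0 * math.pi * var)


def coupled_estimates(x_v, x_p, sigma_v, sigma_p, sigma_c, w, span):
    """Posterior-mean visual and proprioceptive estimates of arm position.

    Hypothetical model (illustration only):
      likelihoods: x_v ~ Normal(s_v, sigma_v^2), x_p ~ Normal(s_p, sigma_p^2)
      coupling prior on d = s_v - s_p:
          w * Normal(0, sigma_c^2) + (1 - w) * Uniform(width=span)
    The uniform component is approximated by a constant density 1/span and
    leaves each cue unshifted; this assumes |x_v - x_p| lies well inside it.
    Returns (s_v_hat, s_p_hat, posterior probability of the coupled component).
    """
    delta = x_v - x_p
    total_var = sigma_v**2 + sigma_p**2 + sigma_c**2
    # Marginal evidence for each prior component given the observed discrepancy.
    ev_coupled = w * gaussian_pdf(delta, total_var)
    ev_uncoupled = (1.0 - w) / span
    p_coupled = ev_coupled / (ev_coupled + ev_uncoupled)
    # Under the Gaussian component each cue shifts toward the other in
    # proportion to its own variance; the posterior mean of the mixture
    # weights that shift by the posterior probability of coupling.
    s_v_hat = x_v - p_coupled * (sigma_v**2 / total_var) * delta
    s_p_hat = x_p + p_coupled * (sigma_p**2 / total_var) * delta
    return s_v_hat, s_p_hat, p_coupled


# Hypothetical parameters (cm), chosen only to show the qualitative pattern.
for sigma_c in (1.0, 4.0):        # narrower vs. relaxed coupling dispersion
    for delta in (2.0, 10.0):     # small vs. large visuo-proprioceptive conflict
        s_v, _, p_c = coupled_estimates(delta, 0.0, sigma_v=1.0, sigma_p=2.0,
                                        sigma_c=sigma_c, w=0.5, span=60.0)
        print(f"sigma_c={sigma_c}, delta={delta}: P(coupled)={p_c:.2f}, "
              f"relative visual pull={(delta - s_v) / delta:.3f}")
```

In this toy setting the relative pull (shift divided by discrepancy) is largest at small discrepancies, and widening `sigma_c` flattens its fall-off with distance, which is the kind of distance-dependent change a coupling-prior analysis is meant to capture. With `w = 1` and `sigma_c = 0`, both estimates collapse onto the reliability-weighted average, i.e. full cue fusion.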