Abstract

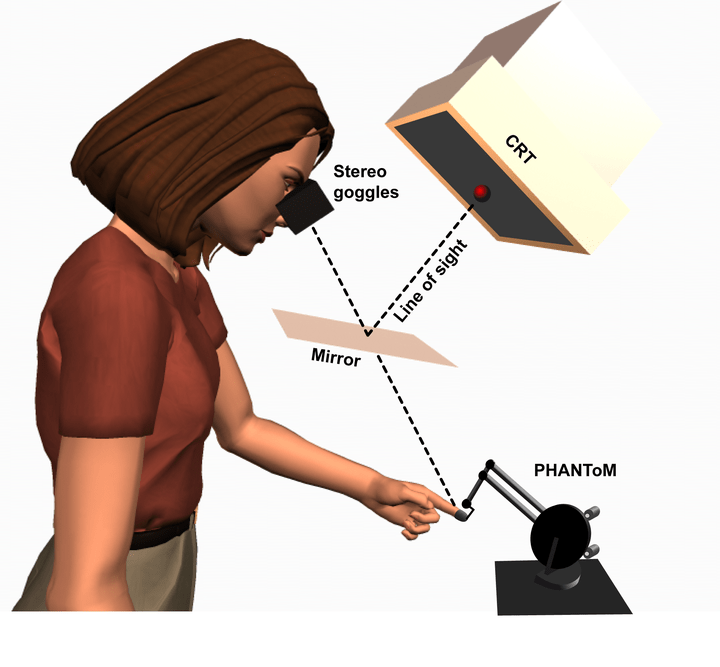

Perception is fundamentally underconstrained because different combinations of object properties can generate the same sensory information. To disambiguate sensory information into estimates of scene properties, our brains incorporate prior knowledge and additional "auxiliary" sensory information (i.e., information not directly relevant to the desired scene property) to constrain perceptual interpretations. For example, knowing the distance to an object helps in perceiving its size. The literature contains few demonstrations of the use of prior knowledge and auxiliary information in combined visual and haptic disambiguation and almost no examination of haptic disambiguation of vision beyond "bistable" stimuli. Previous studies have reported that humans integrate multiple unambiguous sensations to perceive single, continuous object properties, like size or position. Here we test whether humans use visual and haptic information, individually and jointly, to disambiguate size from distance. We presented participants with a ball moving in depth with a changing diameter. Because no unambiguous distance information is available under monocular viewing, participants rely on prior assumptions about the ball’s distance to disambiguate their size percept. Presenting auxiliary binocular and/or haptic distance information augments participants' prior distance assumptions and improves their size judgment accuracy, though binocular cues were trusted more than haptic cues. Our results suggest that both visual and haptic distance information disambiguate size perception, and we interpret these results in the context of probabilistic perceptual reasoning.