Object shape and material

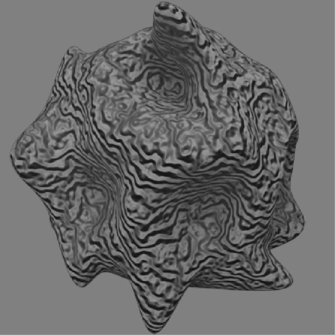

The perception of 3D shape arises from a complex interplay between local analysis and contextual information. When we observe an object, we integrate multiple sources of depth information, called cues. Such cues are not always perfectly aligned, and when they conflict the brain needs additional information and knowledge to resolve the discrepancy. For example, with conflicting stereoscopic and motion information about a moving dot, the brain cannot know which source to “trust”. In such a case, the movement of dots in the background changes how much the stereo and motion information can be trusted, so that the perceived depth of the dot depends on the velocity of the surrounding dots (Di Luca, Domini, Caudek, 2007).

In my undergraduate thesis I investigated how contextual dynamic illumination distorts the perceived 3D shape (Caudek, Domini, Di Luca, 2004a). As with dot movement, the perception of shape seems to be influenced by the illumination direction, and movement of the light source could be confused with loosely rigid changes in the orientation of the shape. Similarly, there is a tendency to perceive 3D structures as loosely rigid when integrating local distortions of random dots: the distributed local analysis across the surface of objects is not performed in isolation, but incorporates a loose rigidity constraint (Di Luca, Domini, Caudek, 2004). What might appear counterintuitive is that local information and global constraints can have different effects on the perception of geometric properties like depth, slant, or curvature. As a result, although our perception of shape appears congruent and integrated, measuring the perception of geometric properties in isolation could lead to an apparent incongruence of perceptual judgments (Di Luca, Domini, Caudek, 2010).

3D shape is not only perceived through visual information.
For example, the shape and position of objects can be specified by haptic information in addition to visual information, as when grasping an object while looking at it. In such multisensory interactions, the brain attempts to give an integrated interpretation of the available signals so as to explain away all the information (Battaglia, Di Luca, Ernst, et al. 2010).

Similarly, the perception of an object's material depends on both local and global information. For example, although highlights on a shiny object are located on small patches (most likely near areas of high curvature), seeing an object as glossy requires a global pattern of highlights and shading, which is analyzed by a specific network of mid-level visual brain areas that includes the posterior fusiform sulcus (pFs) and area V3B/KO (Sun, Ban, Di Luca, & Welchman, 2014).